So far, so good, but the experiment by Julian Kelly, Google research scientist, and his team was a demonstration of a method that could one day be used to create a good system for error correction in quantum computing. Quantum computing: Confusion can mask a good story, but don't take anyone's word for it.What you need to know from today's Google IO: Chatty AI, collab tools, TPU v4 chips, quantum computing.South Korea plans large scale quantum cryptography adoption, thanks in part to tech partnership with USA.Quantum Key Distribution: Is it as secure as claimed and what can it offer the enterprise?.

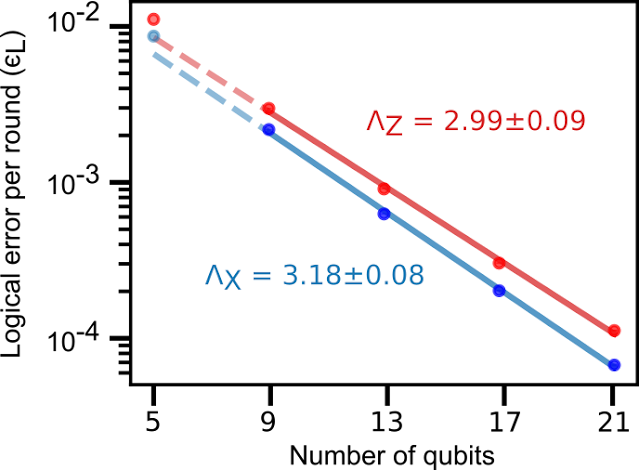

It was also able to demonstrate the error suppression was stable over 50 rounds of correction. In the Chocolate Factory's setup, five to 21 physical qubits were used to represent a logical qubit and, with some post-hoc classical computing, it found that error rates fell exponentially for each additional physical qubit, according to a paper published in Nature this week. Google's approach to the problem is to create a parallel set of qubits "entangled" with the qubits performing the calculation exploiting one of the other strange phenomena of quantum mechanics.Īlthough arrays of physical qubits have been used to represent a single, "logical qubit" before, this is the first time they have been used to calculate errors. Looking inside a qubit is impossible, as pioneer of quantum mechanics Erwin Schrödinger famously imagined when trying to assess the true health of a cat when randomly subjected to a life-threatening quantum event inside a box.

Conventional computers are also prone to errors, but account for them by making copies of bits and performing a comparison. Qubits are notoriously unstable, and susceptible to the slightest environmental interference, but understanding how much error that instability introduces is also difficult. Now, in practical terms, it is difficult to overstate exactly how much heavy lifting the words "in theory" are doing in that last sentence. In theory, as you add qubits, the power of your quantum computer grows exponentially, increasing by 2 n, where n is the number of qubits. That mixed state is known as a "superposition". Each qubit can be 0 and 1, as in classical computing, but can also be in a state where it is both 0 and 1 at the same time. It is not yet an effective system for error correction itselfĪ qubit is the quantum equivalent to a conventional computing bit. a demonstration of a method that could one day be used to create a good system for error correction in quantum computing.

The claim was then hotly contested by IBM, but that is another story. In December 2019, Google claimed quantum supremacy when its 54-qubits processor Sycamore completed a task in 200 seconds that the search giant said would take a classical computer 10,000 years to finish. Google has demonstrated a significant step forward in the error correction in quantum computing – although the method described in a paper this week remains some way off a practical application.
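The 2^n scaling of qubits is easier to appreciate as arithmetic. Here is a minimal Python sketch, assuming a brute-force classical simulator that stores one complex amplitude per basis state at 16 bytes each (the size of a double-precision complex number); the function name `state_vector_bytes` and that storage choice are illustrative assumptions, not anything Google or IBM described.

```python
def state_vector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory a brute-force simulator would need for an n-qubit state.

    Assumes one complex amplitude (16 bytes by default) per basis state.
    """
    amplitudes = 2 ** n_qubits  # n qubits span 2^n basis states
    return amplitudes * bytes_per_amplitude


if __name__ == "__main__":
    for n in (1, 2, 10, 30, 54):
        print(f"{n:2d} qubits: {2 ** n:,} amplitudes, {state_vector_bytes(n):,} bytes")
```

Each extra qubit doubles the memory. At 54 qubits, the count quoted for Sycamore, the naive state vector comes to 2^58 bytes, roughly 288 petabytes, which gives a feel for why classical simulation of such a chip is contentious.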

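The conventional trick of copying bits and comparing them is a repetition code, and it also shows how adding redundancy can push errors down exponentially. Below is a minimal classical sketch in Python, assuming each copy flips independently with a hypothetical probability `p`. It is a toy model only, not Google's quantum protocol: qubits cannot simply be read and compared, which is why Google's scheme uses a parallel set of entangled qubits instead. The function names are illustrative.

```python
from math import comb


def majority_vote(bits: list[int]) -> int:
    """Decode a repetition code: return the value held by most copies."""
    return 1 if sum(bits) > len(bits) // 2 else 0


def logical_error_rate(n: int, p: float) -> float:
    """Exact probability that a majority vote over n copies returns the wrong bit.

    Assumes n is odd and every copy flips independently with probability p
    (a simplified, hypothetical noise model).
    """
    if n % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    # Decoding fails only when more than half of the copies have flipped.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


if __name__ == "__main__":
    # A 1 stored in five copies survives two corrupted copies.
    print(majority_vote([1, 0, 1, 1, 0]))  # → 1

    p = 0.01  # hypothetical per-copy error probability
    for n in (1, 3, 5, 7, 9, 11):
        print(f"{n:2d} copies: logical error rate {logical_error_rate(n, p):.2e}")
```

The exact binomial sum is used instead of a random simulation so the numbers are reproducible. With `p = 0.01`, each extra pair of copies cuts the failure rate by a roughly constant factor (about 30 in this toy model), which is what exponential suppression looks like.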